Companies are laying off experienced architects and doubling down on offshore coding teams — at the exact moment AI is automating the implementation work those teams do. The math says AI-augmented senior teams are cheaper and better than offshoring....

Every enterprise AI strategy deck I have seen in the past few years contains the same promise: “We will build a RAG-based knowledge assistant that lets employees query our internal documents in natural language.” The board nods. The budget gets approved....

Z-ordering and Liquid Clustering both aim to improve Databricks query performance through data skipping. But when your data is skewed, one of them quietly becomes useless. A visual explanation of why — and how the Hilbert curve changes everything.

A vendor-neutral look at the architectural trade-offs that actually matter. I have spent over 20 years building and optimising enterprise data warehouses, mostly on Teradata, and increasingly on Snowflake and Databricks. What I have observed repeatedl...

When Microsoft launched Fabric, it promised to unify analytics under one roof. Databricks, meanwhile, has been building its lakehouse platform for years. Both aim to be your central data platform — but they make fundamentally different bets on how mu...

I am running a poll on LinkedIn to find out which data platform migration paths are most common right now. If your company has migrated from Teradata, Oracle, SQL Server, or any other platform, I would appreciate your vote and your experience in the...

If you have spent any amount of time working with Teradata, you know that the Primary Index is one of the most important design decisions you make. It determines how data is distributed across AMPs and whether your joins are fast or slow. Choosing th...

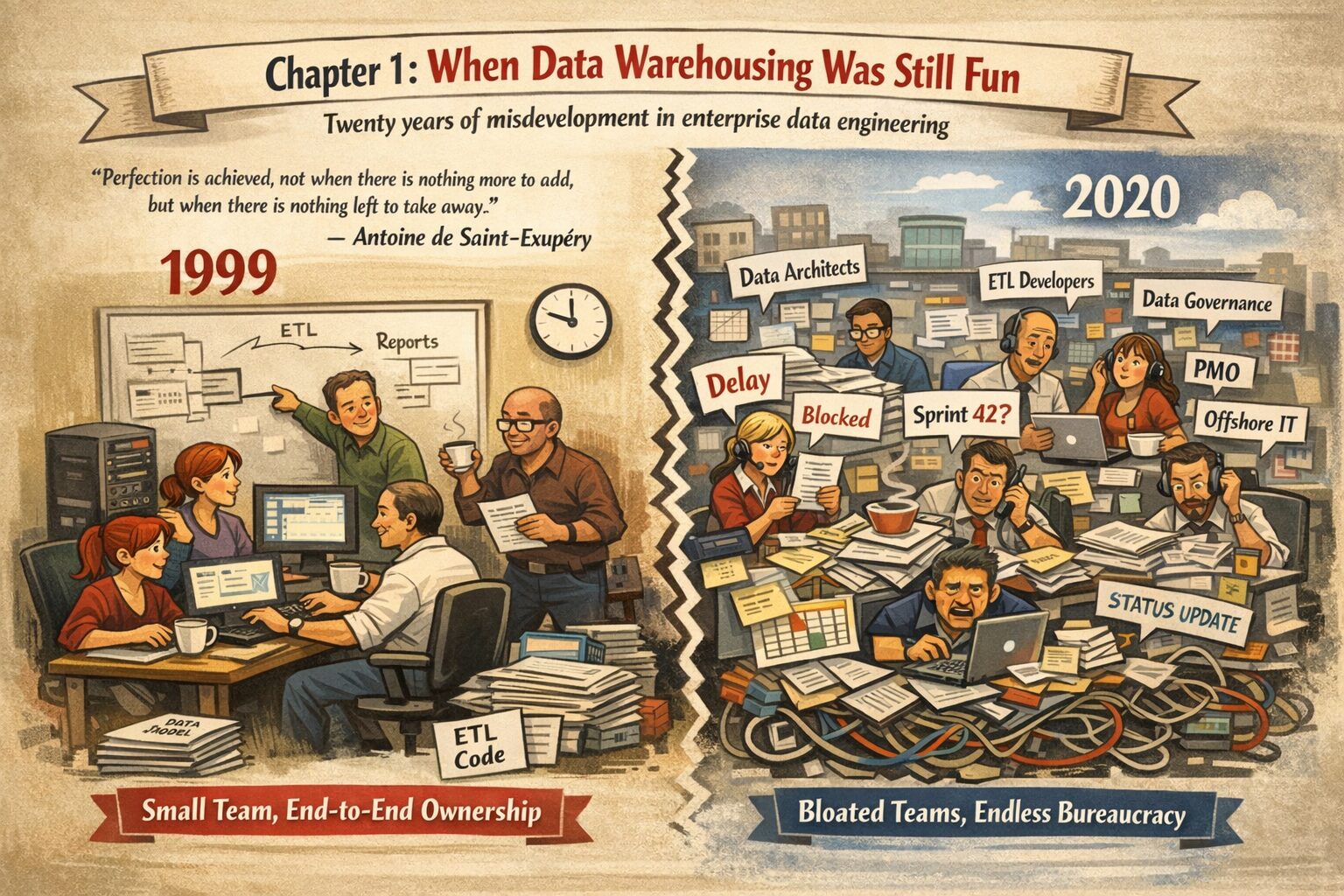

In 1999, five people could build a production data warehouse in twelve months. Today, the same company needs 120 people and 3 years to achieve the same outcome. I started writing about how we got here. This post looks back at a time when small teams w...

There was a time when a single team could build an entire data warehouse. Not a team of forty. Not a team of sixty distributed across three continents and coordinated by a project management office that had never seen an execution plan. A team of fiv...

SQL is dead. The future is MapReduce. OK, we need SQL on Hadoop. Here's Hive. Hive is too slow. The future is Spark. OK, we need SQL on Spark. Here's Spark SQL. Notice a pattern? Teradata had this figured out in the 1980s. I wrote the full timeline. Read t...

Somewhere around 2020, the data world quietly arrived at a conclusion that Teradata engineers could have told you in 1984: SQL on a massively parallel architecture is a pretty good way to process large volumes of data. The path to get there was anyth...

If two companies migrate the same Teradata system to Snowflake, with the same data, indexes, statistics, and workloads, will they get the same bill? They will not. And the reason has less to do with warehouse sizing or SQL translation than most teams...

Migration success stories are everywhere. A quick search reveals case studies of companies that moved from Teradata to Snowflake and achieved faster queries, lower total cost of ownership, and happier analysts. Vendors publish them. Consultants refer...

Why data warehouse professionals have been doing “bronze, silver, gold” for over 20 years. If you have been working in data warehousing for any length of time, the first time you heard about the “medallion architecture” you probably had one reaction:...